Detect hallucinations before your customers do.

You've tried Langfuse, maybe even taken an AI evaluation course, and found that the manual approach doesn't scale. Use Scorable to stop your AI from hallucinating.

No sign up required. 100 free evaluations/day.

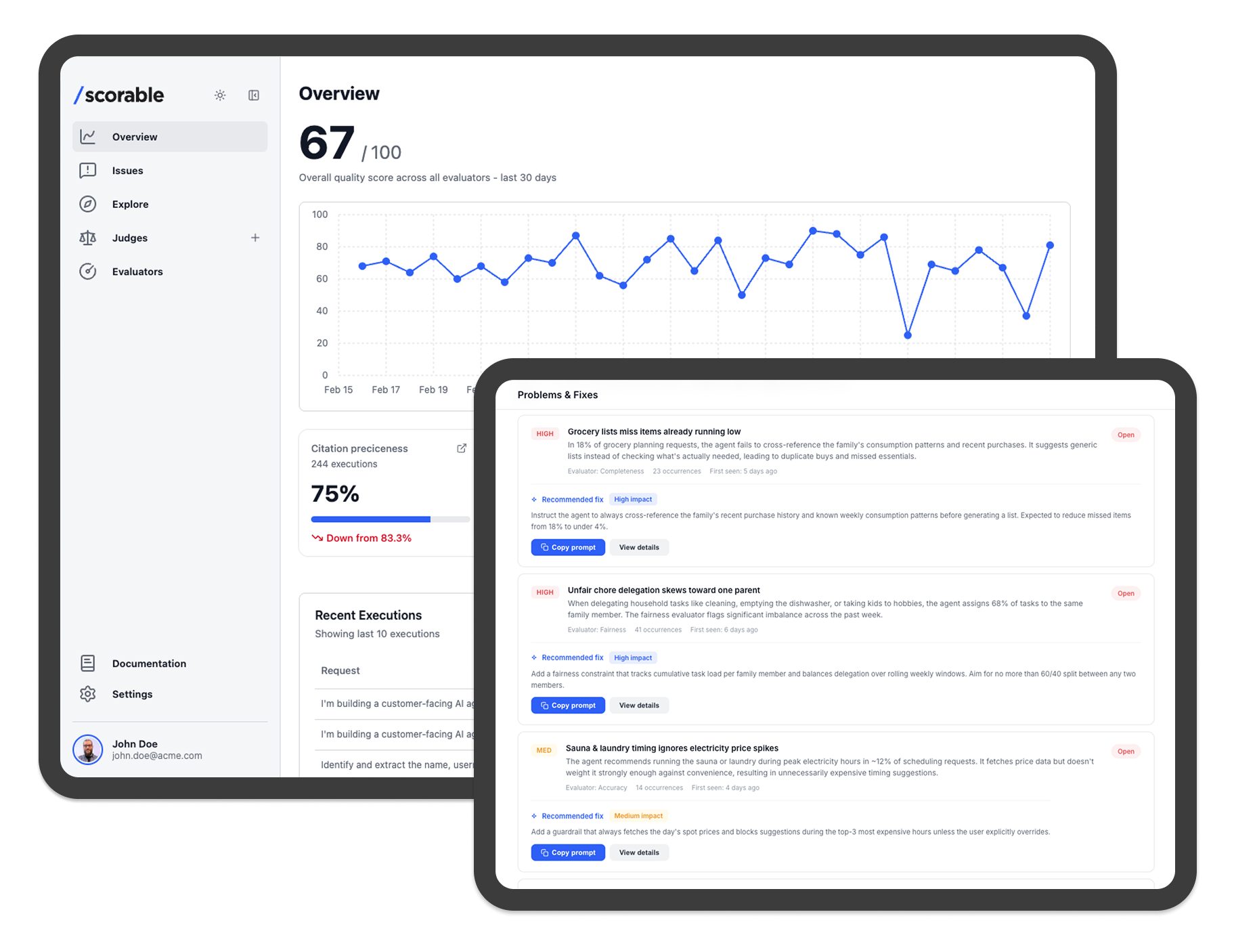

Know what your AI is doing at a glance.

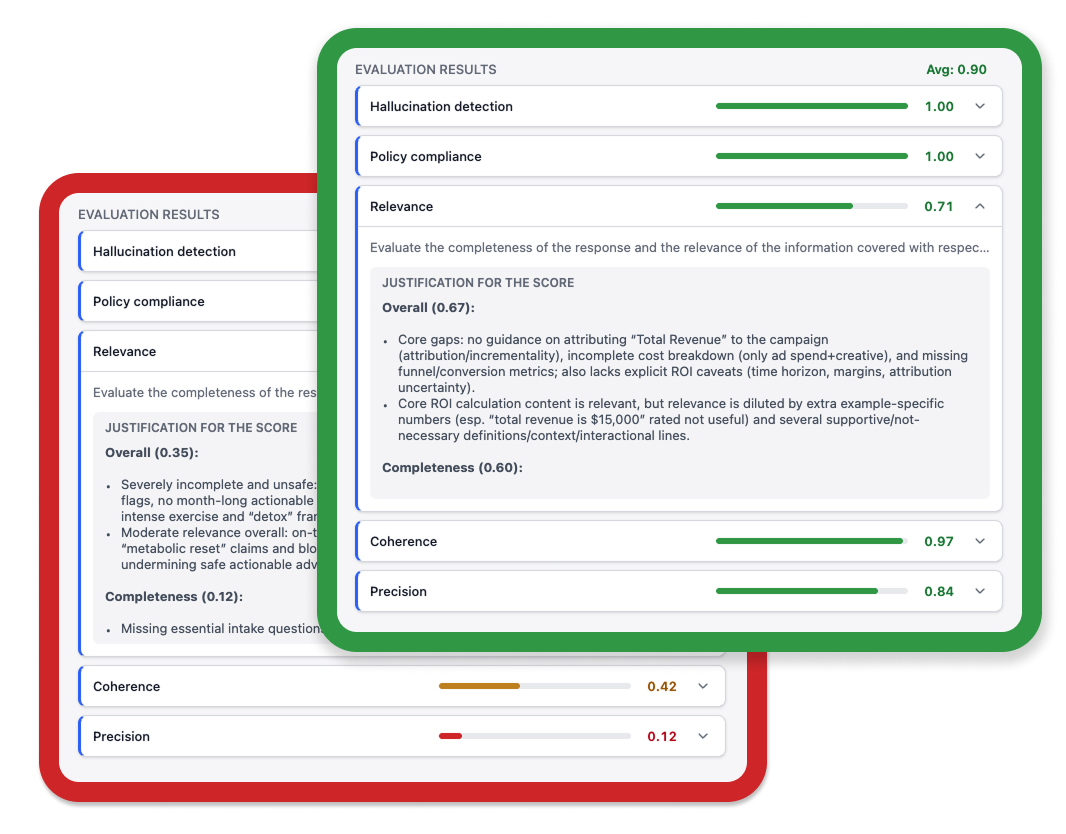

Scorable scores every AI response with a plain-language justification. No digging through logs. No waiting for a user complaint. Just a clear picture of what your AI is doing, right now.

Catch policy violations before users see them

Proxy mode evaluates responses in-flight and blocks or rectifies non-compliant outputs.

Audit trail ready for compliance review

Every evaluation is logged with its score, justification, and timestamp.

Know what was flagged and why

Not a black box. Plain-language explanation with every result.

Define policy in plain language

No evaluation engineering required. Describe the rule; Scorable builds the evaluator.

Identify bad behaviour and fix it.

When an evaluator flags a violation, you get the score, the reason, and the exact response that failed. Fix the prompt, update the policy, and verify the change. Documented governance, not reactive damage control.

One bad response is all it takes. Don't find out the hard way.

No credit card required. 100 free evaluations/day.