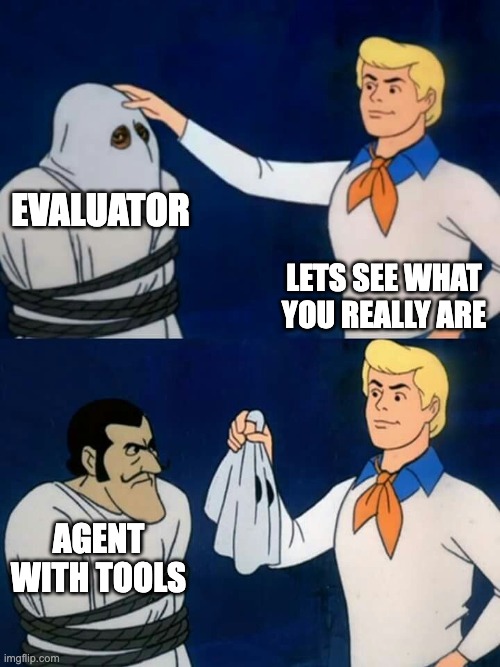

The feature the Scorable team is most proud of is the "Evaluator Factory" (the thing on our landing page). Before it got to where it is now, it was, to put it mildly, a mess. After several iterations and lessons learned from blunders, we arrived at an LLM-powered automation that we can call no-vibes.

As in: no blind prompt changes, no desperate "bro, please please return UUIDs correctly 🙏🙏🙏" (well, there is a bit of that).

Why an Evaluator Factory?

The docs of the emerging (though already quite popular) Pydantic AI library have a nice quote on evals:

Unlike unit tests, evals are an emerging art/science; anyone who claims to know for sure exactly how your evals should be defined can safely be ignored.

There's of course some truth to that. Evaluation is, a lot of the time, black magic. Or at least, it feels that way. It's an involved process, requiring context, nuance, and a deeper understanding of what your LLM-powered app is actually trying to do.

If that is so, how do we make the evaluation process less like black magic and more approachable?

It all starts with a discovery phase, and that is where we saw an opportunity to improve things.

Before picking any evaluators or frameworks, we need to understand the use case. That's where the Evaluator Factory comes in: it's designed to first deeply understand a user's goal.

Its job is to help you understand the evaluation needs of your app. On top of that, it gives you a baseline evaluator stack implementation: something you can immediately plug in and use to guardrail your app.

A simple intent such as "I am building a chatbot for my webshop selling strawberries and I want to measure how well it answers questions about my inventory" should be enough to get started for someone who is not deep into the weeds of evaluation.

Building from First Principles

While we had a library of existing evaluators, our first principle was to always start with the use case, not our library. The role of the Evaluator Factory, therefore, is to first understand the goal, and only then compose an evaluation stack. This involves either selecting the right tools from our library or, if no suitable tool exists, guiding the user to define a new, custom evaluator.

We started like always: with a quick-and-dirty PoC. But pretty soon, we asked ourselves: how do we do this right?

That meant defining the expected outcome first. In practice: writing the evaluation layer before (or at least in parallel with) the Evaluator Factory features it was meant to assess.

So, evaluators to assess the evaluators. Very meta.

Naturally, we built the evaluators using the Scorable platform and abstractions.

Evaluators are not just for point-in-time verification. We track the Evaluator Factory continuously, both during development (whenever we change agent prompts, models, or behaviors) and in production. That lets us observe trends over time: is the Evaluator Factory producing better evaluators, or is its performance degrading?
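Tracking the factory over time boils down to storing a score per run and comparing each new run against recent history. The sketch below assumes each run yields one aggregate score in [0, 1]; the `RunResult` type, the window size and the tolerance are hypothetical illustrations, not Scorable's actual implementation.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunResult:
    """One evaluation run of the Evaluator Factory (hypothetical shape)."""
    version: str   # e.g. a prompt/model revision identifier
    score: float   # aggregate evaluator score in [0, 1]


def is_regression(history: list[RunResult], latest: RunResult,
                  window: int = 5, tolerance: float = 0.05) -> bool:
    """Flag the latest run if it falls noticeably below the recent average.

    Compares against the mean of the last `window` runs; `tolerance`
    (an arbitrary example value) absorbs run-to-run LLM noise.
    """
    if not history:
        return False  # nothing to compare against yet
    baseline = mean(r.score for r in history[-window:])
    return latest.score < baseline - tolerance


history = [RunResult("v1", 0.78), RunResult("v2", 0.81), RunResult("v3", 0.80)]
print(is_regression(history, RunResult("v4", 0.79)))  # False: within tolerance
print(is_regression(history, RunResult("v5", 0.70)))  # True: clear drop
```

A rolling window keeps the baseline tied to recent behavior, so slow drifts show up as trends rather than being masked by old, better runs.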

This ties directly back to our "no-vibes" mandate. The Evaluator Factory is a measurable system now, not a "looks good to me 👍" system. Human eyeballing is always welcome, but we'd rather spend that time on things that don't scale.

An Eval Stack Isn't Complete Without Test Data

An evaluation stack is useless without data to run against it. That's why, when the Evaluator Factory generates a set of evaluators, it also generates a corresponding synthetic dataset specifically designed to test them, making it a cohesive package, not a separate feature.

Of course, generating realistic synthetic data isn't trivial. There are a host of startups working on synthetic data problems, and it's easy to see why.

You can start with a prompt like "You are a friendly synthetic data generator, create me synthetic data", but wow, there's a lot more to it. You need examples, steps, structure. Otherwise, your generator just creates noise.

We spent time tuning both the data generator and the data evaluator. Our goal: output synthetic data that was realistic enough to resemble an actual use case, but simple enough that users could tell good inputs from bad ones.
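One way to check that synthetic data lets users tell good from bad inputs is to label each generated example and verify that an evaluator scores the two groups apart. This is a minimal sketch: `SyntheticExample`, the `expected_good` label and the `margin` value are illustrative assumptions, and the toy evaluator stands in for an LLM judge.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable


@dataclass
class SyntheticExample:
    request: str
    response: str
    expected_good: bool  # label assigned by the generator (assumption)


def separates(examples: list[SyntheticExample],
              evaluator: Callable[[SyntheticExample], float],
              margin: float = 0.3) -> bool:
    """True if good examples outscore bad ones by at least `margin` on average."""
    good = [evaluator(e) for e in examples if e.expected_good]
    bad = [evaluator(e) for e in examples if not e.expected_good]
    if not good or not bad:
        raise ValueError("need both good and bad examples")
    return mean(good) - mean(bad) >= margin


# Toy stand-in for an LLM judge: rewards answers that mention stock levels.
def toy_evaluator(e: SyntheticExample) -> float:
    return 1.0 if "in stock" in e.response else 0.0


examples = [
    SyntheticExample("Do you have Elsanta?", "Yes, 40 boxes in stock.", True),
    SyntheticExample("Any organic berries?", "Organic trays are in stock.", True),
    SyntheticExample("Do you have Elsanta?", "Strawberries are red!", False),
]
print(separates(examples, toy_evaluator))  # True
```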

This connects back to our earlier claim: the Evaluator Factory isn't a chat interface with a clever system prompt. It acts like a good context engineer, squeezing as much detail out of the use case as possible.

If you give a vague description of your use case ("I am building a chatbot"), it evaluates it and asks for more info: Which domain are you in? Do you have any policy documents or examples? It's more like a well-defined form with a few appropriate questions than an open-ended chat.
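The "form, not chat" idea can be sketched as a fixed checklist of context fields, with one follow-up question for each field the user left empty. The field names and questions below are hypothetical, modeled on the questions mentioned above; per the post, the real factory works out what to ask with LLM agents rather than a static list.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical follow-up questions, keyed by the context field they fill in.
FOLLOW_UPS = {
    "domain": "Which domain are you in?",
    "policy_documents": "Do you have any policy documents the app must follow?",
    "examples": "Can you share a few example inputs and outputs?",
}


@dataclass
class UseCaseContext:
    description: str
    domain: Optional[str] = None
    policy_documents: list[str] = field(default_factory=list)
    examples: list[str] = field(default_factory=list)


def follow_up_questions(ctx: UseCaseContext) -> list[str]:
    """Return one question per missing (None or empty) context field."""
    return [q for name, q in FOLLOW_UPS.items() if not getattr(ctx, name)]


ctx = UseCaseContext("I am building a chatbot")
for q in follow_up_questions(ctx):
    print(q)
```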

Under the Hood

The pipeline is simple, really, as it should be. The heavy lifting is left to the LLMs.

Step 1: Preprocessing the Intent

We start by checking whether the user's intent even makes sense. We quickly learned to filter out overly broad intents like "Is my chatbot good?" The Evaluator Factory forces the user to be more specific, guiding them toward a measurable goal like, "Does my chatbot correctly answer questions based only on the provided documents?"

We use (naturally) a custom evaluator built with our own platform for that step. Yay, dogfooding!

Step 2: Assessing the App Shape

Is it a question/response flow, like a chatbot?

Or does it just output a block of content, like summarization or classification?

That information goes downstream and influences both the evaluator stack generation and the synthetic data agent.
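The app shape fits naturally into a small enum carried in the metadata passed downstream. This sketch assumes the two shapes described above; the names `AppShape` and `UseCaseMetadata`, and the way the shape selects which fields a synthetic example needs, are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class AppShape(Enum):
    QUESTION_RESPONSE = "question_response"  # e.g. chatbots
    CONTENT_OUTPUT = "content_output"        # e.g. summarization, classification


@dataclass(frozen=True)
class UseCaseMetadata:
    intent: str
    shape: AppShape


def synthetic_fields(meta: UseCaseMetadata) -> tuple[str, ...]:
    """Which fields each synthetic example needs, depending on the app shape."""
    if meta.shape is AppShape.QUESTION_RESPONSE:
        return ("request", "response")
    return ("response",)  # content-output apps: evaluate the LLM output only


meta = UseCaseMetadata("Answer inventory questions", AppShape.QUESTION_RESPONSE)
print(synthetic_fields(meta))  # ('request', 'response')
```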

Step 3: Evaluator & Synthetic Agent Orchestration

The orchestration agent uses the structured metadata from the previous steps to run a semantic search against our library of evaluator templates. If no match is found above a certain confidence score, it pivots to the custom-building flow.
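The template lookup with its fallback can be sketched as a nearest-neighbour search over embedding vectors with a cutoff. This assumes the metadata and templates are already embedded; the vectors are toy values, the template names are hypothetical, and the 0.8 threshold is an arbitrary example. Returning `None` stands for pivoting to the custom-building flow.

```python
import math
from typing import Optional


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def best_template(query: list[float], templates: dict[str, list[float]],
                  threshold: float = 0.8) -> Optional[str]:
    """Return the most similar template name, or None to trigger custom building."""
    if not templates:
        return None
    name, score = max(((n, cosine(query, v)) for n, v in templates.items()),
                      key=lambda pair: pair[1])
    return name if score >= threshold else None


templates = {  # toy 3-d embeddings; real ones would come from an embedding model
    "answer_relevance": [0.9, 0.1, 0.0],
    "policy_adherence": [0.0, 0.2, 0.9],
}
print(best_template([0.8, 0.2, 0.1], templates))  # answer_relevance
print(best_template([0.5, 0.5, 0.5], templates))  # None -> custom flow
```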

Meanwhile, a separate agent constructs the synthetic data and its evaluator using the same metadata inferred from the use case description.
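Because both agents read the same metadata and don't depend on each other's output, they can run concurrently. A sketch with `asyncio.gather`; `build_evaluator_stack` and `generate_synthetic_data` are hypothetical placeholders for the two agents, with short sleeps standing in for LLM calls.

```python
import asyncio


async def build_evaluator_stack(metadata: dict) -> list[str]:
    await asyncio.sleep(0.01)  # stand-in for template search / custom building
    return [f"{metadata['shape']}_relevance"]


async def generate_synthetic_data(metadata: dict) -> list[dict]:
    await asyncio.sleep(0.01)  # stand-in for the synthetic data agent
    return [{"request": "Do you have strawberries?", "response": "Yes."}]


async def orchestrate(metadata: dict) -> tuple[list[str], list[dict]]:
    # Both branches consume the same metadata, so run them in parallel.
    evaluators, dataset = await asyncio.gather(
        build_evaluator_stack(metadata),
        generate_synthetic_data(metadata),
    )
    return evaluators, dataset


evaluators, dataset = asyncio.run(orchestrate({"shape": "question_response"}))
print(evaluators, len(dataset))
```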

The custom evaluator building flow is a complicated beast in itself. To pick one detail: we look for examples in the user inputs (files, descriptions), and if we find any, yet another reasonably complex agentic flow infers a policy from them, which is then used to create a policy-based evaluator.

Everything uses tool calls and structured outputs. We don't parse chat messages, not even for synthetic data. That is a deliberate trade-off: traditional chat prompting would allow us to play with things like temperature or seed, which is sometimes useful.
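With structured outputs, each agent's reply is validated against a schema instead of being scraped out of free-form text. The post says the team uses Pydantic AI for this; to keep the sketch dependency-free, it uses a stdlib dataclass and `json` instead, and the `EvaluatorSpec` fields are hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass
class EvaluatorSpec:
    name: str
    objective: str
    threshold: float


def parse_tool_call(arguments: str) -> EvaluatorSpec:
    """Validate tool-call arguments (a JSON string) into a typed object.

    Raises instead of guessing, so malformed output can be retried.
    """
    data = json.loads(arguments)
    spec = EvaluatorSpec(**data)  # TypeError on missing or unexpected keys
    if not isinstance(spec.threshold, (int, float)) or not 0 <= spec.threshold <= 1:
        raise ValueError(f"threshold out of range: {spec.threshold!r}")
    return spec


raw = '{"name": "inventory_accuracy", "objective": "Answers match stock", "threshold": 0.7}'
print(parse_tool_call(raw))
```

Failing loudly on a bad payload is the point: the caller can retry the tool call rather than passing half-parsed output downstream.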

To make things even more complicated, we also try to identify anything in the user inputs that we could ask for more details about. This tackles the garbage-in, garbage-out problem: we can only get so far with limited context. This is probably the best thing about the pipeline. Rather than being a naive chat interface, it guides the user through an iterative process of tuning an evaluator stack tailored to their specific needs.

```mermaid
flowchart TD
    A["User Input: Use Case Description"] --> B["Step 1: Intent Preprocessing: Validate Intent"]
    B --> C{Can we build an evaluator stack?}
    C -->|No| D["Request More Info: Guided Q&A"]
    C -->|Yes| E["Step 2: App Shape Assessment: Determine App Type"]
    D --> A
    E --> F{App Type?}
    F -->|Question/Response| G["Chatbot Flow"]
    F -->|Content Output| H["LLM Output only"]
    G --> I["Step 3: Orchestration: Parallel Processing"]
    H --> I
    I --> J["Evaluator Generation: Select from Scorable Library or Build Custom"]
    I --> K["Synthetic Data Generation: Create Test Data"]
    J --> L["Evaluator Stack: Performance Measurement"]
    K --> M["Synthetic Examples: Test Data for Validation"]
    L --> N["Complete Evaluation System"]
    M --> N
    style A fill:#e1f5fe
    style B fill:#f3e5f5
    style E fill:#f3e5f5
    style I fill:#f3e5f5
    style J fill:#e8f5e8
    style K fill:#e8f5e8
    style N fill:#f1f8e9
```

The Tech Stack

We use libraries like Pydantic AI and LiteLLM to keep our LLM-powered apps maintainable (we love strict typing), observable (we care about costs) and robust (yes, even Gemini APIs can be down, so we need fallbacks).
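The fallback idea can be sketched as trying providers in order until one succeeds. LiteLLM offers fallback configuration of its own; this stdlib sketch only illustrates the pattern, and the provider callables are hypothetical stand-ins rather than real client calls.

```python
from typing import Callable


class AllProvidersFailed(Exception):
    pass


def complete_with_fallback(prompt: str,
                           providers: list[tuple[str, Callable[[str], str]]]) -> str:
    """Call each provider in order; return the first successful completion."""
    errors = []
    for name, call in providers:
        try:
            return call(prompt)
        except Exception as exc:  # e.g. outage, rate limit, timeout
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def flaky_gemini(prompt: str) -> str:  # hypothetical: simulates an outage
    raise ConnectionError("503 Service Unavailable")


def backup_model(prompt: str) -> str:  # hypothetical: always answers
    return f"answer to: {prompt}"


print(complete_with_fallback("Is Elsanta in stock?",
                             [("gemini", flaky_gemini), ("backup", backup_model)]))
```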

With the release of Pydantic AI 1.0, we remain bullish on the library and its ecosystem. For almost anything else, the half-life of a library's usefulness in the LLM space remains short.

What matters is locking down the behaviors you care about. That's where ground truth datasets and evaluators have their biggest impact, especially for us, since we offer them as a service.

If you want to skip the PoC phase we went through and get a baseline eval stack for your app right now, the Evaluator Factory is a good place to start.