We describe a design pattern and protocol that lets you bootstrap a maximally strong evaluation stack for the AI features in your codebase with minimum effort. The aim is to remove the gruntwork often involved with evals by automating as much of it as possible, but in a principled way that you can actually trust. In practice, this means automatically creating the evaluation stack to make the behavior of your AI application transparent, explainable, and easier to optimize.

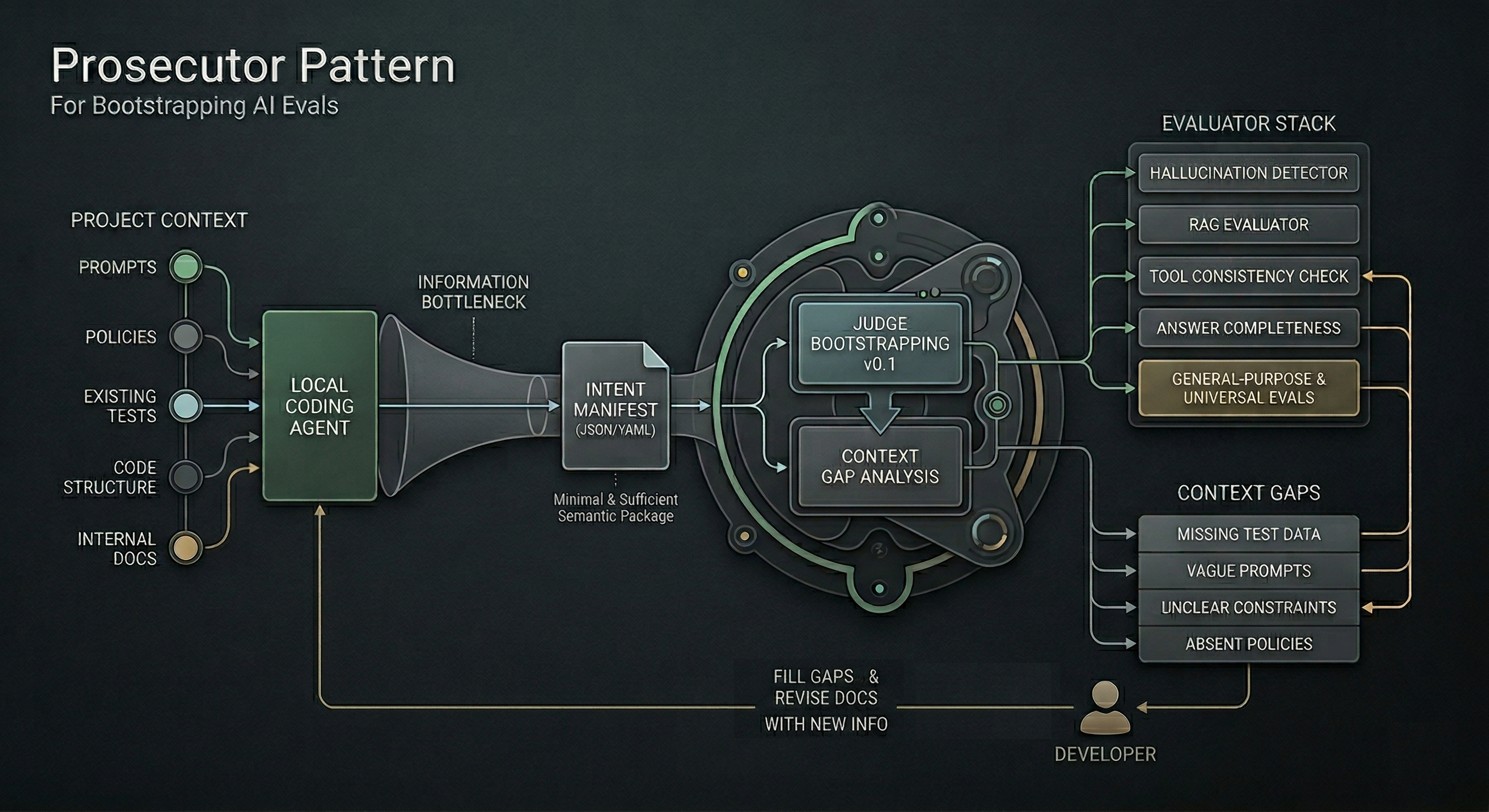

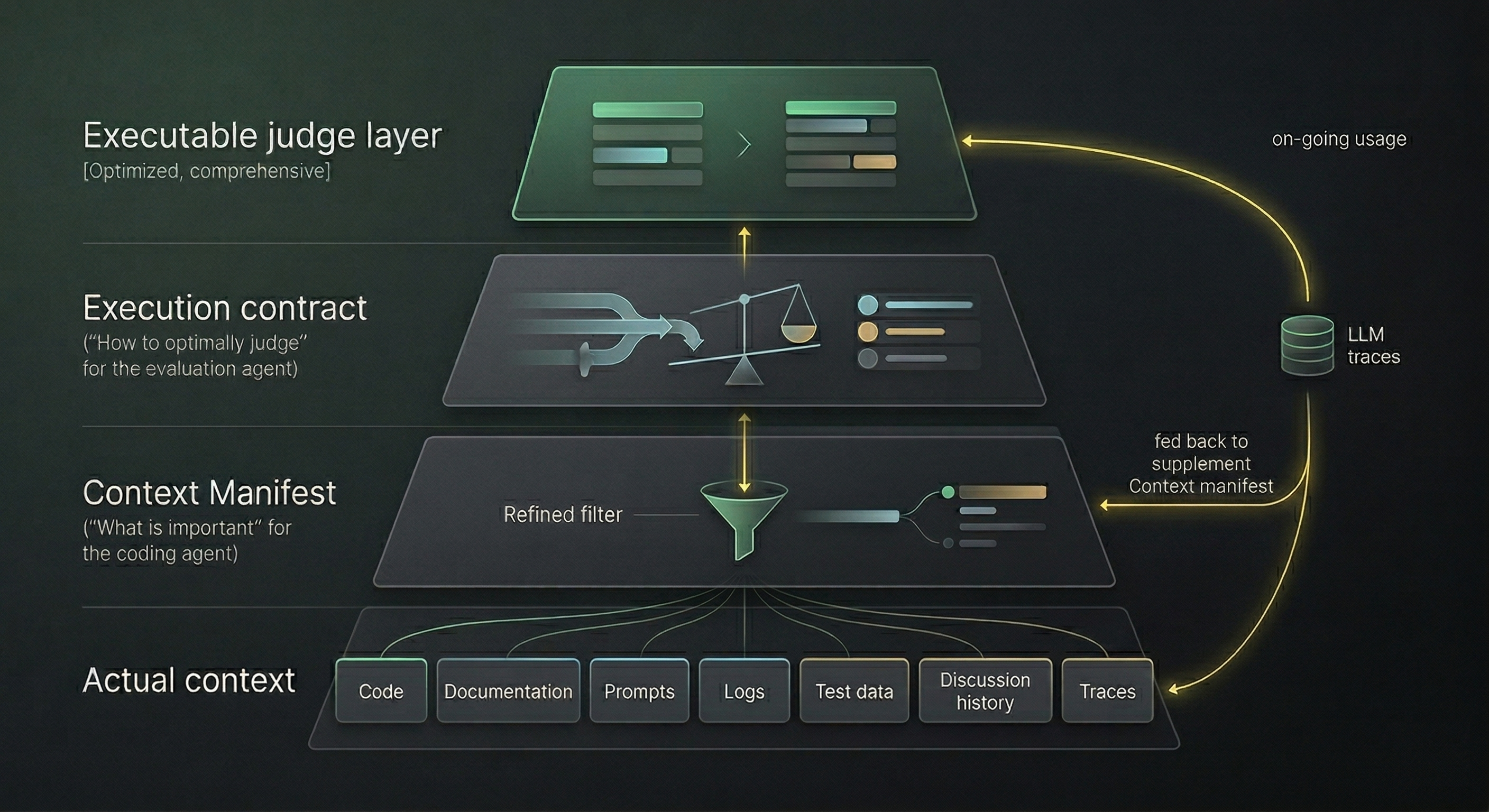

TL;DR: Your coding agent assembles a Context Manifest: a minimal, structured package of everything relevant to define the semantics of each non-deterministic (LLM) call in your application. Those semantics are often captured in side information such as prompts, code references, policies, examples, constraints, and documentation. A distinct meta evaluator then turns that manifest into an Execution Contract which defines what successful behavior looks like and how it should be judged, and compiles that into executable scorers. Your coding agent then integrates the scorers in proper locations in the code.

The protocol codifies a general pattern ("prosecutor - auditor - judges") that is implementation-agnostic. We have a reference implementation at Scorable.ai which has been in development since 2023, informed by seeing countless agent startup stacks and enterprise AI workflow automations.

Data-Driven Obsession Is Slow, Static Self-Evaluation Is Limited

A common protocol to build evals starts from observing issues in trails, which is robust but slow, limited, expensive, and hard to get started with. If your eval stack requires 50 labeled failures from the get-go before you can define anything useful, then early eval work is blocked on time, experts, and a lot of manual effort.

Another extreme is to ask Claude or whichever coding agent you wield to build the whole testing suite for the LLM calls in your codebase. It is then up to you to meta-evaluate the quality and coverage of an evaluation stack the agent designed for itself. What could possibly go wrong?

The problem with vibecoding the evaluation stack is three-fold:

- It is cognitively demanding for the coding agent to perfectly build both the system and the trust layer for that system. This means that the trust layer will often be a facade and sloppy.

- It is a lot of work for humans to verify how good that evaluation stack actually is. It does not make a lot of sense for everyone to create their own standard for how long is "1 meter".

- You will also need to create the hosting and orchestration stack, which introduces another dimension of unreliability and costs.

The Prosecutor Pattern to AI Evals

The solution is to leverage the local coding agent while leveraging a separate specialized agent to resolve the listed challenges.

Data-driven approach starts with the right idea: only production usage will show the true failure modes of your application. But it glosses over the most natural starting point for developers: all the context that you know about your application. Much of that context lives across prompts, policies, existing tests, code structure, internal docs, and the builder's own understanding of what each LLM call is supposed to do. Why should we ignore that?

We can leverage the coding agent to take advantage of this even with little or no existing trace data, and then iteratively improve the evaluation stack as more becomes available. This can be achieved with a "Prosecutor Pattern": Before you can leverage a judge, you need to build a case.

That "case" is a structured description of each LLM call in your codebase about:

- what this component seems intended to do and why

- what kinds of inputs it operates on

- what surrounding context is necessary to assess whether it succeeded or failed

- what constraints appear to apply

- what examples or traces exist

- what uncertainties or gaps are already visible.

Upon finding all that contextual content, the agent extracts a highly-scoped Context Manifest (JSON/YAML) detailing exactly what that specific call is supposed to do, under what constraints.

A reference implementation of that pattern is found in Scorable evaluation platform which any agent can connect to by simply pointing to the instruction file (SKILL.md).

The goal is to follow a least-information principle: Pass forward all the information needed to define and calibrate a good judge, but avoid the much more expensive and privacy-sensitive pattern of shipping everything and hoping a remote evaluator figures it out.

This effectively creates an information bottleneck which makes it easier for the meta evaluator agent to focus on the right things and less likely to overfit.

Scorable then uses that isolated manifest to bootstrap a dedicated v0.1 of your LLM judge. And yes, there are many powerful general-purpose evaluators to draw from, such as hallucination detectors, generally reusable semantic evaluators that capture repeating patterns, and community created evaluators.

Context Gaps Awareness Avoids Slop

However, while the code base alone will hint at the purpose it rarely contains comprehensive test data or enough consistent supporting information. For example, Scorable could infer a "policy adherence" evaluation is needed but might be missing the actual policy. In this case, Scorable would create slop-like evaluators that are "right kind" but cannot really operate.

If the system is aware of and identifies these gaps, the gap management can be treated as a feature, not a bug. The list of such gaps is then pushed back to the coding agent to figure out, and that agent in turn can pass them back to the developer as needed.

The coding agent then asks Scorable to revise the judges with the new information incorporated, etc.

The workflow looks like this:

- Your coding agent reads an instruction file from Scorable.

- That file tells the agent what to inspect in the project in order to identify LLM call sites and assemble a Context Manifest for each one, essentially creating a semantic map.

- The agent sends those manifests to Scorable.

- Scorable uses the manifest to create an Execution Contract: the general plan for creating the stack of necessary independent evaluators for the related LLM call, and assessment of gaps. Those evaluators are either created on the fly or picked from pre-optimized universal evaluators such as those for RAG stages evaluations, answer completeness, tool selection consistency, etc.

- Scorable also identifies context gaps: missing test data and coverage, vague prompts, unclear constraints, absent policies, wrong abstraction boundaries, or other missing pieces that prevent strong evaluation.

- Those gaps are returned to the coding agent or surfaced to the user.

- The agent or user can then fill them in and rerun the process.

Instead of asking a human to manually define the entire evaluation stack up front, the coding agent tries to recover the intent context, build the strongest judge it can from that intent, and then explicitly report what is still missing.

Why This Is a Good Way to Kickstart a Project

The advantages of this approach:

It optimizes user effort. No need to manually author every metric, rubric, or judge definition from scratch as in the classical approach, but also much less meta level thinking needed in the coding agent approach: The agent will ask you a lot of questions about how you actually want to test the LLM, and in the end, you really have to start digging into the approach to understand if it is testing it in the right way.

It is more privacy-preserving than sending the whole codebase over to another expert agent. The unit of handoff is the manifest, not the entire application context.

It separates concerns more cleanly. The coding agent remains responsible for the product logic, while the meta evaluator agent evaluation system takes responsibility for judge construction, orchestration, and iterative refinement.

It makes missing semantics visible. By forcing missing information into the open, the process often improves both the evals and the underlying prompts, tests, and task definitions.

The Courtroom Parable

To wrap everything up, think of the regular AI evaluation as putting the agent to court, suspected of various crimes: hallucinations, ignoring blocks of context, ignoring compliance because "I wasn't aware", etc.

The proposed pattern effectively guides the local coding agent to prepare the case for determining all the ways in which the LLM calls might be failing. This is where the "prosecutor" comes in. The judge then draws from their own general expertise - think of the model's pretraining - to render judgements in the proper context for the case. The judge should then also have the appropriate policy specifically for the situation. But the real action only begins once the code is called, and data flows through. The evaluator stack then resembles the jury (a stack of evaluator metrics) in assessing every aspect of the case, and the judge renders the final judgement for that data point: whether to let it through, or stop it, or try to remediate it, or escalate to the human.

Without prosecution building the case, the judgement will have to be overtly generic, as in "for cases like this, usually something like X is wrong". Without the full process, every trial will take place as if the crime happened the first time ever, leading to inconsistency and variance.

Known Limitations

- The ability of your agent to find all local relevant test data and recognize gaps in it correctly has yet to be tested in complex cases.

- If significant usage data is available but only via a 3rd party observability platform or logging system, leveraging this via the prosecutor pattern is not always robust, as the coding agent must mediate between the evaluation and observability systems.

- The pattern is predicated on an initial Context Manifest being directionally correct; if the initial assessment was wrong in a devious way, the 2 agents might not recover from this on the following iteration rounds.

FAQ

So this is MCP and skills for integrating. What's new? It is integration and semantic bootstrapping. The principle of passing forward a manifest that encapsulates validation context, in a manner of an information bottleneck, seems, though conceptually obvious in hindsight, different from existing paradigms in AI evals.

Why do you need this? Why not just give this prompt to Claude and ask it to make the whole thing? You can totally do that. But there are two problems. First, your mileage on the result may vary - this is a tall order if your codebase is large, because you are asking the agent to do intense meta level analysis. Even though Scorable has been painstakingly tuned for this, it still fails often. And yes it's likely using that same best-of-breed model you are. But the main problem is about the responsibility: Now you will maintain both your application, your test cases, your evaluation stack definitions, and responsibility for the judge calibration and orchestration.

What else is similar? You can find partially similar but not as comprehensive patterns in e.g. Sentry and Posthog integration scripts, and something adjacent in Hamel Husain's "evaluation skills".

Wouldn't it be easier to just share the whole codebase to the agent that does this? Maybe, but there is the privacy aspect, and you might have more than the codebase to go with.

You will get so much better judges with real data. This approach just leads to the "known knowns". If you have the real data already, then that should be the key part informing the Manifest! If the data has already been passed over to Scorable, it can be leveraged to refine the metrics in the judge later automatically.